A discussion on AI’s role in the security industry focused on current and future applications of the technology at the Security Industry Association’s GovSummit event in Washington, D.C., this week.

Members of the panel agreed that today’s AI technologies have the potential to vastly improve the efficiency and effectiveness of security operations.

“Previously standalone technologies are coming together in ways we have never seen before,” Todd Veazie of Kiernan Group Holdings said in his opening remarks. “These machines now see as we see and hear how we hear, allowing cameras and microphones to automate many tasks. These systems’ recognition abilities allow for new inferences about the nature of the environment and the choices we make to operate in it.”

Even amid that optimism, members of the panel wanted to cut through the hype surrounding AI technologies. Adam Ayotte-Savino, the senior manager of organizational effectiveness at ASIS, described the solutions being used today as “data-centric systems that are able to process large amounts of information to detect patterns and irregularities.”

The straightforward definition proved to be a useful anchor as the panelists discussed the dizzying capabilities such systems can offer end users.

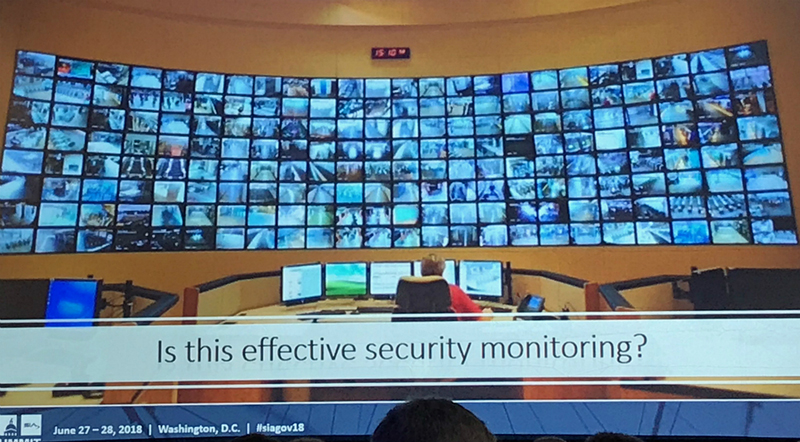

AI technologies can be a force multiplier for officials tasked with monitoring large amounts of data. (Photo via SIA’s GovSummit)

AI Opportunities in Security

David Sousa of the Federal Protective Service, which operates under the Department of Homeland Security, explained AI’s immediate role in the security industry by describing a situation familiar to anyone who’s been in an emergency operations center.

“[Organizations] have dozens of systems, each with multiple sensors,” Sousa said. “A security manager is tasked with interpreting all of that data flowing in. Right now that’s one unlucky guy.”

With AI and machine learning, Sousa says software can be combined with integrated audio and video analytics systems to make monitoring operations vastly more efficient.

“These systems provide data, but if we think of them as merely inputs to a technology that can make sense of all that information, we can get much more actionable data,” Sousa explained. “That means we can have monitors that only get alerts when several different sensors have decided an event needs to be looked at.”
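The corroboration idea Sousa describes can be sketched in a few lines of Python. This is a minimal illustration, not a description of any system discussed on the panel: the `Detection` record, the sensor and zone names, the two-sensor minimum and the 30-second window are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    sensor_id: str    # hypothetical identifier, e.g. "camera-1"
    zone: str         # hypothetical area label, e.g. "lobby"
    timestamp: float  # seconds since some reference point


def corroborated_zones(detections, min_sensors=2, window_s=30.0):
    """Return zones where at least `min_sensors` distinct sensors
    reported a detection within `window_s` seconds of each other."""
    by_zone = {}
    for d in detections:
        by_zone.setdefault(d.zone, []).append(d)

    alerts = set()
    for zone, items in by_zone.items():
        items.sort(key=lambda d: d.timestamp)
        start = 0
        for end in range(len(items)):
            # Shrink the window until it spans at most window_s seconds.
            while items[end].timestamp - items[start].timestamp > window_s:
                start += 1
            sensors = {d.sensor_id for d in items[start:end + 1]}
            if len(sensors) >= min_sensors:
                alerts.add(zone)
                break
    return sorted(alerts)


detections = [
    Detection("camera-1", "lobby", 0.0),
    Detection("microphone-4", "lobby", 12.0),
    Detection("camera-2", "garage", 5.0),
    Detection("camera-2", "garage", 9.0),
]
print(corroborated_zones(detections))  # ['lobby']
```

In this sketch the lobby raises an alert because a camera and a microphone agree within the window, while the garage does not because the same camera fired twice. A real deployment would weigh sensor reliability and event type rather than simply counting sensors.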

Sousa believes sensing technology and computing power are already advanced enough to make AI useful.

“We have sensors that can tell me who in this room has a fever or who is acting suspiciously,” Sousa said. “That’s where we start to look at AI and machine learning to provide better information.”

The panelists also dispelled fears of mass job displacement, telling the audience that AI will augment security officers more than it will replace them.

Limits to AI’s Advance into the Security Industry

Members of the panel also named three requirements that officials need to build into their security infrastructure to get the full value of AI on their campuses: systems integration, user education and data collection.

“We need to implement these technologies so that they focus on where we need them to focus,” Sousa said. “[Organizations] also need to connect their disparate systems in order for AI to maximize efficiencies.”

Additional considerations include risks such as privacy concerns around data collection, the deployment of systems that aren’t ready and the hacking of AI-based systems.

“[With autonomous vehicles], we’ve seen someone put stickers on street signs that convince the self-driving cars to blow right through any stop sign,” Ayotte-Savino noted. “A sticker can actually hack some of these systems.”

To navigate these risks, Sousa speculated that some common standards or government regulations may be adopted in the near future.

“It’s a little bit of an unknown from my standpoint, but as these technologies advance, there’s going to need to be some semblance of control over them,” he said.

Overall, the panel encouraged organizations to consider the risks involved with these systems long before they implement them.

“You’ll always need a degree of human interaction, and that comes back to education,” Ayotte-Savino said. “But if you don’t understand and think about the limitations of AI in security, you’re in trouble.”

The Road Forward for AI in Security

Many systems, particularly in the video surveillance realm, already offer some form of machine learning on their devices or through cloud services.

Christian Morin, the vice president of cloud services at Genetec, noted on a separate panel earlier in the day that cloud services have recently exploded onto the scene in the security industry and are maturing rapidly.

But Sousa says most of the AI technology incorporated into systems today is a watered-down version of what’s to come.

“We’re already seeing some basic AI, but most of these systems are very rules-based, they give you yes or no answers,” Sousa explained. “Now we’re starting to look at the normal environment and determining who’s exhibiting any abnormal behavior; that’s not rules-based, that’s learned.”
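The distinction Sousa draws between a fixed rule and a learned baseline can be illustrated with a toy Python example. It is only a sketch under stated assumptions: the function names, the sample counts and the thresholds are invented for illustration, and a simple z-score stands in for the far richer machine learning models that commercial products would use.

```python
from statistics import mean, stdev


def rule_based_alert(count, limit=10):
    """Fixed rule: a yes-or-no answer against a hand-picked limit."""
    return count > limit


def learned_alert(history, count, z_threshold=3.0):
    """Learned baseline: flag a reading that sits far outside what
    this particular location normally sees (simple z-score test)."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return count != mu
    return abs(count - mu) / sigma > z_threshold


# Hypothetical counts of people seen in a corridor on past nights.
overnight_history = [0, 1, 0, 2, 1, 0, 1, 1]

print(rule_based_alert(6))                  # False
print(learned_alert(overnight_history, 6))  # True
```

Six people in the corridor overnight never trips the fixed limit of 10, but it stands out sharply against what the location normally sees, which is the kind of abnormal behavior Sousa says learned systems are starting to catch.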

Security officials can’t simply buy an AI system and step back. First, they need to invest in the sensors, personnel training and integration to make the technology work.

But, based on the panelists’ predictions, that effort will be worth it in the near future.